Most robotic manipulation planners assume they already know the exact state of the world – where every object is, which bin it’s in, its precise pose. In practice, sensors are noisy, depth cameras lie, and state estimation is rarely exact. When a planner ignores that uncertainty, you get missed grasps, wrong bins, and failed picks. This project builds a motion planner that operates in belief space – it doesn’t just plan paths through physical space, it plans motions that actively reduce the robot’s uncertainty before committing to a grasp.

I built this as a final project for MIT 6.4212 (Robotic Manipulation) with Thiago Veloso. We called the approach “Search-Then-Commit”: the Kuka iiwa arm first searches for information to resolve its uncertainty, then commits to the grasp only once it’s confident enough. The planner is built on the Rapidly-exploring Random Belief Tree (RRBT) framework, implemented in Drake.

The Setup

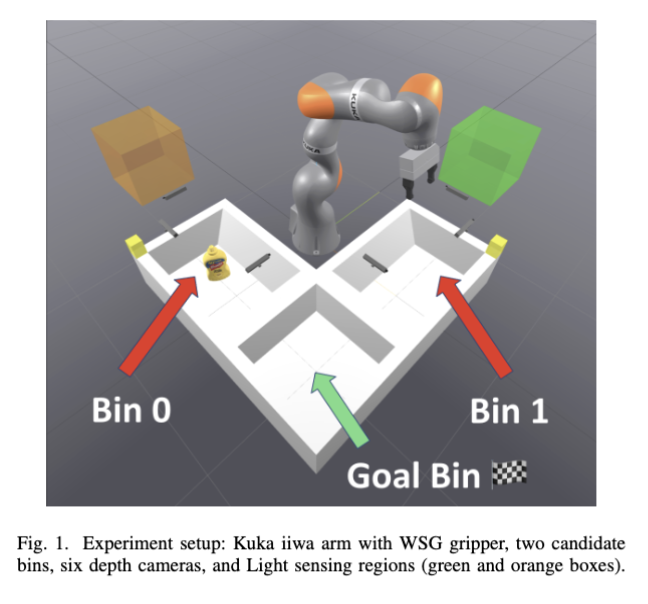

The scenario: a mustard bottle is hiding in one of two bins, and the robot doesn’t know which one. Six depth cameras are watching the scene, but they’re noisy – outside of certain “sweet spot” zones (the green and orange boxes in the image), the sensor data is basically useless. The robot’s job is to pick up the bottle and place it in the goal bin on the right.

A naive planner would just guess a bin, plan a path, and go for the grasp. If it guesses wrong – which happens about half the time – that’s a failed pick. Our planner gathers evidence first and reaches in only once it knows where to go.

How It Works

The robot’s strategy has two phases, both driven by uncertainty reduction:

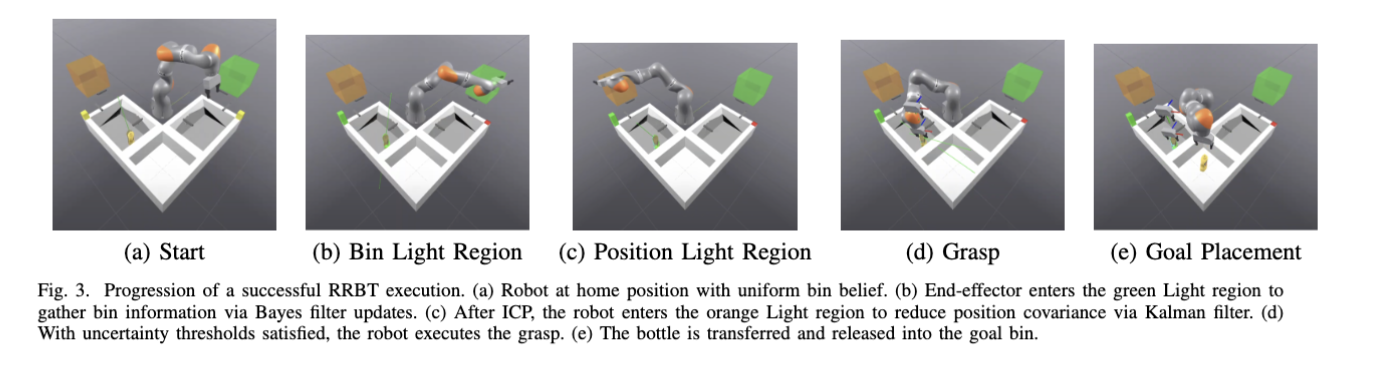

Phase 1 – “Which bin is it in?” The robot moves its end-effector into the green sensing zone, where the cameras give reliable readings. It collects a few good observations there and runs a filter over them to figure out which bin actually contains the object. No more coin-flip guessing.
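To make the Phase 1 idea concrete, here is a minimal sketch of a discrete Bayes update over the two bin hypotheses. It is not the project’s actual filter: the function name `update_bin_belief`, the 0.9 detection accuracy, and the observation sequence are all assumptions chosen for illustration.

```python
# Hypothetical sensor model: inside the green zone, a camera reading names
# the correct bin with probability P_CORRECT. This value is an assumption.
P_CORRECT = 0.9


def update_bin_belief(belief, observed_bin, p_correct=P_CORRECT):
    """One discrete Bayes update over the two hypotheses (bin 0, bin 1)."""
    # Likelihood of seeing `observed_bin` if the object is really in bin b.
    likelihood = [
        p_correct if b == observed_bin else 1.0 - p_correct
        for b in range(2)
    ]
    unnormalized = [lik * prior for lik, prior in zip(likelihood, belief)]
    total = sum(unnormalized)
    return [u / total for u in unnormalized]


# Start from the 50/50 prior and fold in four made-up readings,
# one of which (the third) points at the wrong bin.
belief = [0.5, 0.5]
for observed_bin in [0, 0, 1, 0]:
    belief = update_bin_belief(belief, observed_bin)

print(belief)  # roughly [0.988, 0.012]
```

Even with one contradictory reading, a handful of observations from a reliable zone is enough to push the belief far from 50/50, which is why it pays to move to the zone before choosing a bin.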

Phase 2 – “Where exactly is it?” Once the robot knows the right bin, it still doesn’t know the precise XY position of the bottle (sensor noise, remember). So it moves to the orange sensing zone to collect high-fidelity position data and shrink its position uncertainty until the estimate is tight enough to grasp. Only then does it commit to a grasp trajectory.
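The Phase 2 shrinking can be sketched with a Kalman-style measurement update, applied independently to X and Y for a bottle that is assumed not to move. This is an illustration under stated assumptions, not the project’s estimator: the prior (10 cm standard deviation), the in-zone sensor noise (1 cm), and the readings are hypothetical.

```python
import math

PRIOR_STD = 0.10  # assumed prior uncertainty per axis, in meters
MEAS_STD = 0.01   # assumed sensor noise inside the orange zone, in meters


def fuse_measurement(mean, var, z, meas_var):
    """Scalar Kalman measurement update for a static quantity."""
    gain = var / (var + meas_var)
    new_mean = mean + gain * (z - mean)
    new_var = (1.0 - gain) * var
    return new_mean, new_var


# Hypothetical (x, y) readings of the bottle position, in meters.
readings = [(0.52, -0.11), (0.49, -0.09), (0.51, -0.10)]

mean = [0.0, 0.0]
var = [PRIOR_STD**2, PRIOR_STD**2]
for z in readings:
    for axis in range(2):
        mean[axis], var[axis] = fuse_measurement(
            mean[axis], var[axis], z[axis], MEAS_STD**2
        )

xy_std = [math.sqrt(v) for v in var]
print(mean, xy_std)  # std drops from 0.10 m to roughly 0.0058 m per axis
```

Each good reading lowers the variance, so the number of observations needed before grasping depends on how tight the grasp tolerance is.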

The key insight: the planner treats uncertainty as a first-class citizen. It doesn’t just plan paths through physical space – it plans paths through belief space, choosing motions that actively reduce what the robot doesn’t know.

Step by Step

The progression below shows the full pipeline in action. The robot starts at home with a 50/50 belief about which bin the object is in, actively gathers information in two phases, and only commits to a grasp once its uncertainty is low enough. Clean and deliberate.

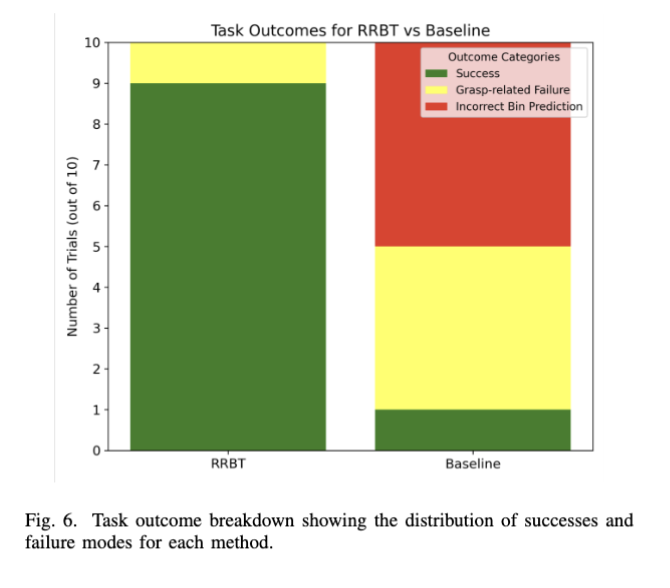
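The “commit only once uncertainty is low enough” rule can be written down as a simple gate. To be clear, this is not the RRBT planner, which chooses motions by searching over beliefs; it is only a rough sketch of the commit criterion, and the thresholds and the name `next_phase` are hypothetical.

```python
import math

BIN_CONFIDENCE = 0.95  # assumed: minimum belief in one bin before moving on
MAX_XY_STD = 0.01      # assumed: largest tolerable position std, in meters


def next_phase(bin_belief, xy_var):
    """Pick the next phase from the current belief (illustrative only)."""
    if max(bin_belief) < BIN_CONFIDENCE:
        return "sense_bin"   # Phase 1: still unsure which bin
    if math.sqrt(max(xy_var)) > MAX_XY_STD:
        return "sense_pose"  # Phase 2: bin known, position still fuzzy
    return "grasp"           # confident enough to commit


print(next_phase([0.5, 0.5], [0.01, 0.01]))        # sense_bin
print(next_phase([0.99, 0.01], [0.01, 0.01]))      # sense_pose
print(next_phase([0.99, 0.01], [2.5e-5, 2.5e-5]))  # grasp
```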

Results

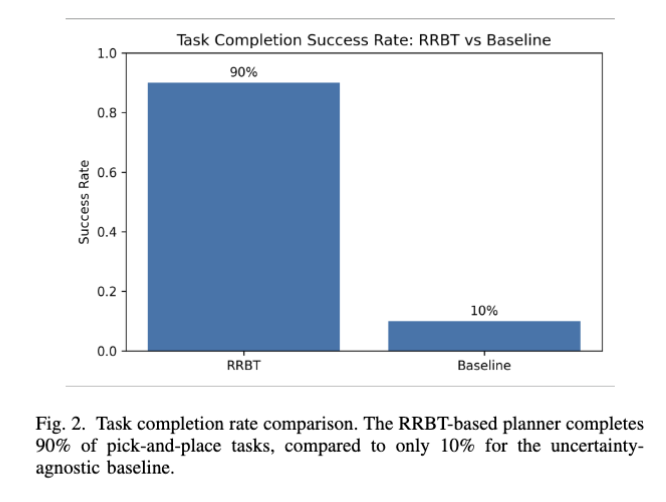

Our planner completed the pick-and-place task in 9 out of 10 trials. The baseline planner that doesn’t account for uncertainty? 1 out of 10. The baseline’s main failure mode was picking the wrong bin entirely – something our planner completely eliminates by actively sensing first.